Project Series

Predicting Tumor Malignancy with ML

- Date: Feb 2023
- Tools: Google Cloud Platform (GCP), HDFS, Spark ML
- Language: Scala
- Data Source: UCI Machine Learning Repository
- Project Outcome: Scalable ML pipeline for tumor malignancy prediction in a distributed big data environment

Project Objective

This portfolio project demonstrates the development of a scalable machine learning pipeline that predicts tumor malignancy from a breast cancer dataset, built in a big data ecosystem powered by Apache Spark on Google Cloud Platform (GCP).

Dataset

- Source: Breast Cancer Wisconsin (Original) dataset from the UCI Machine Learning Repository
- Original Size: 699 rows
- Usable Size (after removing the 16 rows with a missing Bare Nuclei value): 683 observations
- Variables:

(1) Sample code number (id number)

(2) Clump Thickness (1-10)

(3) Uniformity of Cell Size (1-10)

(4) Uniformity of Cell Shape (1-10)

(5) Marginal Adhesion (1-10)

(6) Single Epithelial Cell Size (1-10)

(7) Bare Nuclei (1-10)

(8) Bland Chromatin (1-10)

(9) Normal Nucleoli (1-10)

(10) Mitoses (1-10)

(11) Class (2 = benign, 4 = malignant)
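A minimal sketch of loading and cleaning this data with Spark's Scala API, assuming the header-less, comma-separated layout of the UCI file, where missing values appear as `?`. The object name `LoadTumorData`, the snake_case column names, and the choice to map class 4 to label 1.0 are hypothetical illustrations. They are not necessarily what the project used.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.{col, when}
import org.apache.spark.sql.types.{IntegerType, StructField, StructType}

object LoadTumorData {

  // Hypothetical column names: the raw UCI file has no header row.
  val columnNames: Seq[String] = Seq(
    "id", "clump_thickness", "cell_size_uniformity", "cell_shape_uniformity",
    "marginal_adhesion", "single_epithelial_cell_size", "bare_nuclei",
    "bland_chromatin", "normal_nucleoli", "mitoses", "class")

  def load(spark: SparkSession, path: String): DataFrame = {
    // All eleven columns are integers in the raw file.
    val schema = StructType(columnNames.map(StructField(_, IntegerType, nullable = true)))

    val raw = spark.read
      .schema(schema)
      .option("header", "false")
      .option("nullValue", "?") // parse the "?" placeholder as null
      .csv(path)

    raw
      .na.drop() // drop every row that has a missing value (699 -> 683 on the full file)
      .withColumn("label", when(col("class") === 4, 1.0).otherwise(0.0)) // 4 = malignant
  }
}
```

For example, `LoadTumorData.load(spark, "hdfs:///data/breast-cancer-wisconsin.data")`, where the HDFS path is hypothetical. This can be followed by `printSchema()` and `groupBy("label").count().show()` for the inspection in step 2.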

Development Steps

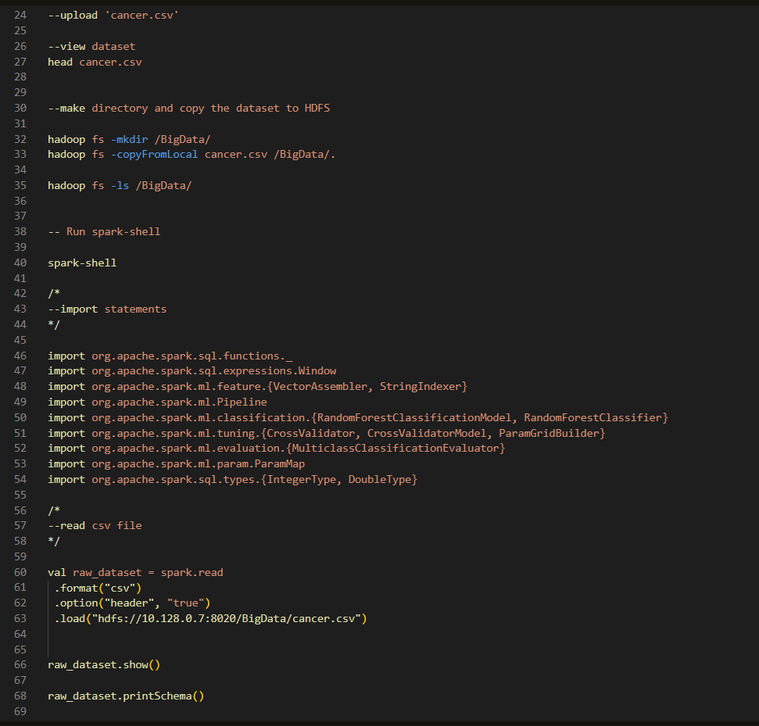

1. Load data into HDFS and read it into the Spark shell

2. Inspect schema and summarize dataset in Spark

3. Split data into training and test sets (80/20)

4. Assemble features into a single vector

5. Create a Random Forest classifier with input/output columns

6. Build a pipeline combining feature assembly and model fitting

7. Evaluate model and tune hyperparameters

8. Perform cross-validation

9. Predict on unseen test data

10. Calculate model performance metrics
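Steps 3 to 6, 9 and 10 map closely onto Spark ML's `Pipeline` API. The sketch below shows one possible arrangement. It assumes a DataFrame with the nine feature columns and a numeric `label` column (0.0 benign, 1.0 malignant). The column names, `numTrees = 20`, and the seed of 42 are illustrative values, not the project's recorded settings.

```scala
import org.apache.spark.ml.{Pipeline, PipelineModel}
import org.apache.spark.ml.classification.RandomForestClassifier
import org.apache.spark.ml.evaluation.MulticlassClassificationEvaluator
import org.apache.spark.ml.feature.VectorAssembler
import org.apache.spark.sql.DataFrame

object TumorPipeline {

  // The nine cytological features; id and the raw class column are excluded.
  // Hypothetical names: they must match the columns of the loaded DataFrame.
  val featureCols: Array[String] = Array(
    "clump_thickness", "cell_size_uniformity", "cell_shape_uniformity",
    "marginal_adhesion", "single_epithelial_cell_size", "bare_nuclei",
    "bland_chromatin", "normal_nucleoli", "mitoses")

  /** Splits 80/20, fits assembler + random forest, returns the model and test accuracy. */
  def trainAndEvaluate(data: DataFrame, numTrees: Int = 20): (PipelineModel, Double) = {
    // Step 3: 80/20 train/test split, seeded for reproducibility.
    val Array(train, test) = data.randomSplit(Array(0.8, 0.2), seed = 42L)

    // Step 4: combine the feature columns into a single vector column.
    val assembler = new VectorAssembler()
      .setInputCols(featureCols)
      .setOutputCol("features")

    // Step 5: random forest reading "features"/"label" and writing "prediction".
    val rf = new RandomForestClassifier()
      .setFeaturesCol("features")
      .setLabelCol("label")
      .setPredictionCol("prediction")
      .setNumTrees(numTrees)
      .setSeed(42L)

    // Step 6: chain both stages so a single fit() runs assembly and training.
    val model = new Pipeline().setStages(Array(assembler, rf)).fit(train)

    // Steps 9-10: predict on the held-out rows and compute accuracy.
    val predictions = model.transform(test)
    val accuracy = new MulticlassClassificationEvaluator()
      .setLabelCol("label")
      .setPredictionCol("prediction")
      .setMetricName("accuracy")
      .evaluate(predictions)

    (model, accuracy)
  }
}
```

Keeping the assembler inside the pipeline means the fitted `PipelineModel` can be applied directly to new raw rows, without a separate feature-assembly step.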

Code Snapshots

The following script snippets illustrate techniques used at different stages of the project. They are not exhaustive, but they show the range of operations involved.
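Steps 7 and 8 (tuning and cross-validation) are commonly combined with Spark ML's `CrossValidator`. Here is a minimal sketch of that snapshot. It assumes `pipeline` ends in the `RandomForestClassifier` passed as `rf`, and that `train` holds the 80% training split. The object name `TumorTuning`, the grid over `numTrees` and `maxDepth`, and the default of five folds are hypothetical choices.

```scala
import org.apache.spark.ml.Pipeline
import org.apache.spark.ml.classification.RandomForestClassifier
import org.apache.spark.ml.evaluation.MulticlassClassificationEvaluator
import org.apache.spark.ml.tuning.{CrossValidator, CrossValidatorModel, ParamGridBuilder}
import org.apache.spark.sql.DataFrame

object TumorTuning {

  /** k-fold cross-validation over a small random forest grid; `pipeline` must contain `rf`. */
  def crossValidate(pipeline: Pipeline, rf: RandomForestClassifier, train: DataFrame,
                    numFolds: Int = 5): CrossValidatorModel = {
    // Hypothetical grid values, chosen only for illustration (2 x 2 = 4 candidates).
    val paramGrid = new ParamGridBuilder()
      .addGrid(rf.numTrees, Array(10, 50))
      .addGrid(rf.maxDepth, Array(3, 5))
      .build()

    val evaluator = new MulticlassClassificationEvaluator()
      .setLabelCol("label")
      .setPredictionCol("prediction")
      .setMetricName("accuracy")

    // Each candidate is trained numFolds times; the best one is refit on all of `train`.
    new CrossValidator()
      .setEstimator(pipeline)
      .setEstimatorParamMaps(paramGrid)
      .setEvaluator(evaluator)
      .setNumFolds(numFolds)
      .setSeed(42L)
      .fit(train)
  }
}
```

The returned model's `avgMetrics` holds the mean cross-validated accuracy for each grid point. Its `bestModel` can then be applied to the held-out 20% for the final estimate.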

Conclusion

The Random Forest model achieved an accuracy of 0.97 on the held-out test data, indicating strong predictive capability. The model was trained on a relatively small dataset (683 observations). However, the pipeline runs on a distributed Spark and HDFS stack, so the same code can be scaled to larger datasets by adding cluster resources rather than rewriting it. This framework can be readily adapted to similar datasets and extended to future experiments involving larger or more complex data.
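Accuracy folds both kinds of error into one number. For tumor screening it can also be worth reporting precision and recall for the malignant class, because a missed malignancy (false negative) and a false alarm (false positive) carry different costs. A minimal plain-Scala sketch, assuming (label, prediction) pairs with 1.0 = malignant; the `BinaryMetrics` name is hypothetical:

```scala
// Binary metrics from (label, prediction) pairs, with malignant (1.0) as the positive class.
final case class BinaryMetrics(accuracy: Double, precision: Double, recall: Double, f1: Double)

object BinaryMetrics {

  // Safe division: returns 0.0 when the denominator is zero.
  private def ratio(num: Int, den: Int): Double =
    if (den == 0) 0.0 else num.toDouble / den

  def fromPairs(pairs: Seq[(Double, Double)]): BinaryMetrics = {
    val tp = pairs.count { case (label, pred) => label == 1.0 && pred == 1.0 }
    val tn = pairs.count { case (label, pred) => label == 0.0 && pred == 0.0 }
    val fp = pairs.count { case (label, pred) => label == 0.0 && pred == 1.0 }
    val fn = pairs.count { case (label, pred) => label == 1.0 && pred == 0.0 }

    val precision = ratio(tp, tp + fp) // share of predicted-malignant cases that are malignant
    val recall = ratio(tp, tp + fn)    // share of malignant cases the model caught
    val f1 =
      if (precision + recall == 0.0) 0.0
      else 2 * precision * recall / (precision + recall)

    BinaryMetrics(ratio(tp + tn, pairs.size), precision, recall, f1)
  }
}
```

On a test set of this size the pairs can be collected to the driver, for example with `predictions.select("label", "prediction").as[(Double, Double)].collect().toSeq` when `spark.implicits._` is in scope.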